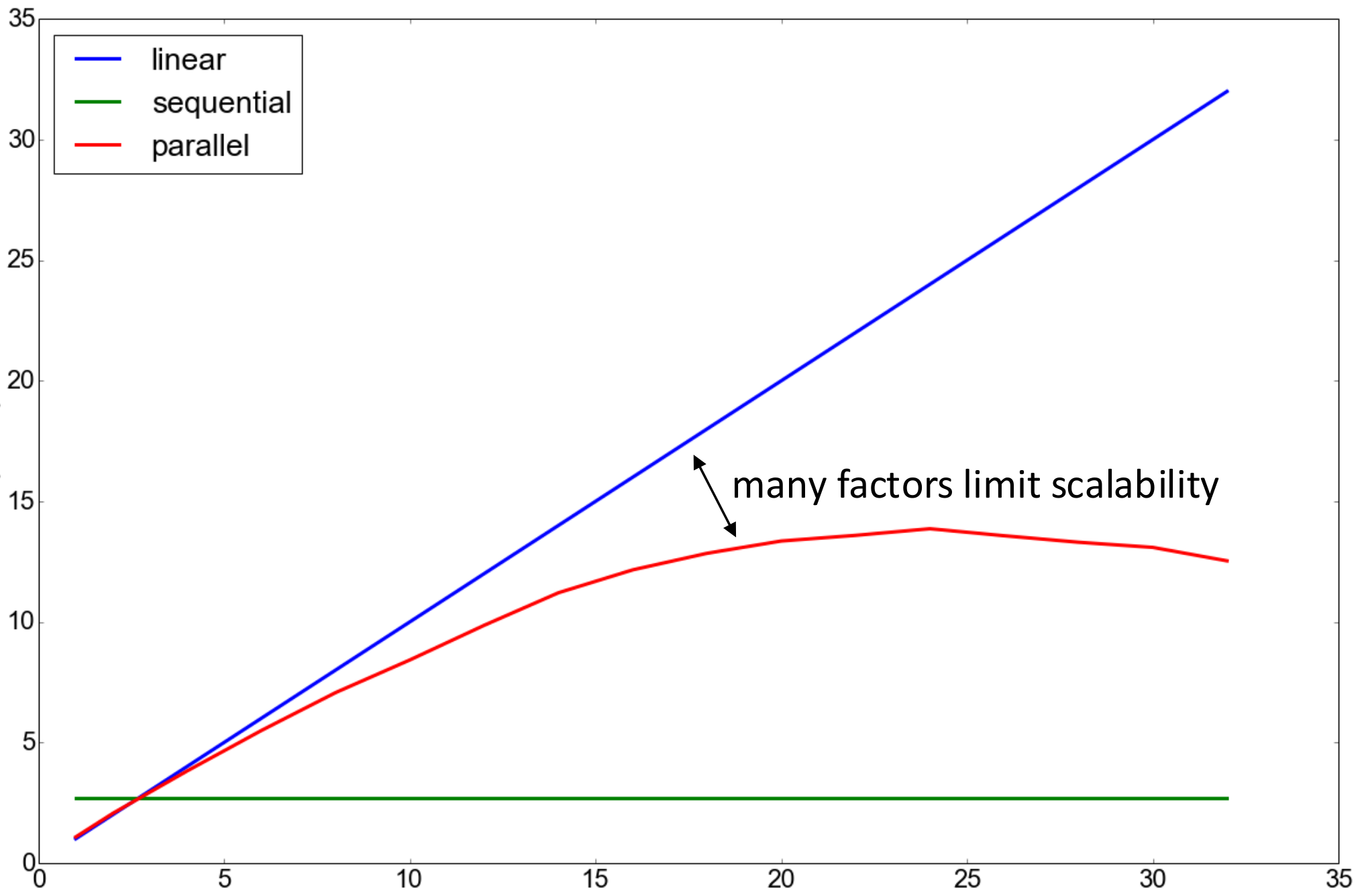

There are fundamental issues with throwing more cores at a problem.

- The remaining sequential parts come to dominate the runtime

- The data structures must be chosen to suit parallel access

- Synchronisation and switching overheads add up

This results in non-linear speedup.

Cache

There are multiple levels of cache in a CPU. The closer a level sits to the core (and the smaller it usually is), the faster its access time.

This is where locality counts in programs (row-major vs. column-major access). In a program, summing an array with a stride of 64 lands on a different cache line with every access (assuming typical 64-byte lines), so it will consistently produce cache misses.
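The effect of stride on locality can be sketched with a toy model that only counts how many distinct cache lines a strided walk touches. It does not measure real hardware; the 8-byte element size, the 64-byte line size and the function name `cache_lines_touched` are assumptions made for illustration.

```python
def cache_lines_touched(num_accesses, stride, elem_size=8, line_size=64):
    """Count distinct cache lines touched by a strided walk (toy model, not a measurement)."""
    lines = set()
    for i in range(num_accesses):
        byte_offset = i * stride * elem_size   # byte address of the i-th accessed element
        lines.add(byte_offset // line_size)    # index of the cache line that holds it
    return len(lines)

# Same number of accesses, very different number of lines fetched.
print(cache_lines_touched(1024, stride=1))    # 128: eight 8-byte elements share each line
print(cache_lines_touched(1024, stride=64))   # 1024: every access lands on a new line
```

Under these assumptions the strided walk fetches eight times as many lines for the same number of additions, which is the cost that column-major traversal of a row-major array runs into.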

Measuring Performance

Parallel Performance

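Sequential execution time: $T_1$. Parallel execution time on $p$ cores: $T_p$.

- $T_p = T_1 / p$ (perfection)

- $T_p > T_1 / p$ (performance loss, normal situation)

- $T_p < T_1 / p$ (magic)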

Parallel Speedup

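The speedup on $p$ cores is $S_p = T_1 / T_p$, where $T_1$ is the sequential and $T_p$ the parallel execution time.

- $S_p = p$: linear speedup (perfection)

- $S_p < p$: sub-linear speedup

- $S_p > p$: super-linear speedup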

Efficiency

$E_p = S_p / p$ (the speedup $S_p$ divided by the number of cores $p$), how well utilised each core is.
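The definitions of speedup and efficiency can be sketched in a few lines. The function names and the timings (120 s sequential, 40 s on 4 cores) are hypothetical values chosen for illustration, not measurements from these notes.

```python
import math

def speedup(t1, tp):
    """Speedup: sequential time divided by parallel time."""
    return t1 / tp

def efficiency(t1, tp, p):
    """Efficiency: speedup divided by the number of cores."""
    return speedup(t1, tp) / p

def classify(t1, tp, p):
    """Label the speedup as linear, sub-linear or super-linear."""
    s = speedup(t1, tp)
    if math.isclose(s, p):
        return "linear"
    return "super-linear" if s > p else "sub-linear"

# Hypothetical timings: 120 s sequentially, 40 s on 4 cores.
print(speedup(120, 40))        # 3.0
print(efficiency(120, 40, 4))  # 0.75
print(classify(120, 40, 4))    # sub-linear
```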

Speedup Calculations

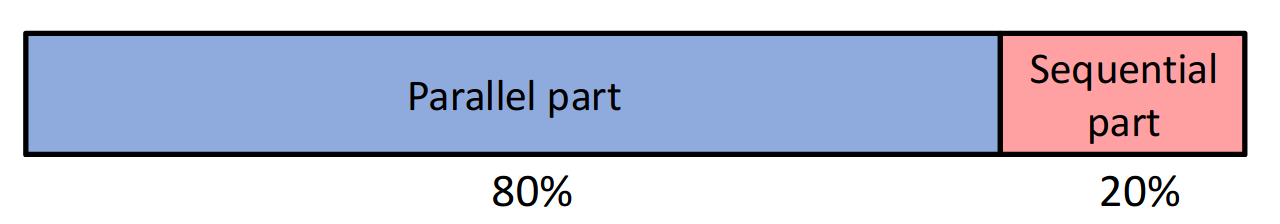

Calculate the speedup of a program with the following distribution:

Identify the sequential and parallel fractions of your program

Calculate the parallel execution time $T_p$ on $p$ cores for this. The speedup is then $S_p = T_1 / T_p$, where the efficiency is $E_p = S_p / p$.
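As a worked illustration of these steps, assume a hypothetical program (not the distribution from the exercise) with $T_1 = 100$ s, of which 20 s is sequential and 80 s is perfectly parallelisable, running on $p = 4$ cores:

$$
\begin{aligned}
T_4 &= 20 + \frac{80}{4} = 40 \text{ s} \\
S_4 &= \frac{T_1}{T_4} = \frac{100}{40} = 2.5 \\
E_4 &= \frac{S_4}{4} = 0.625
\end{aligned}
$$

Even with only a fifth of the work being sequential, each core in this example is used at a little over 60%.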

Laws of Parallel Execution Time

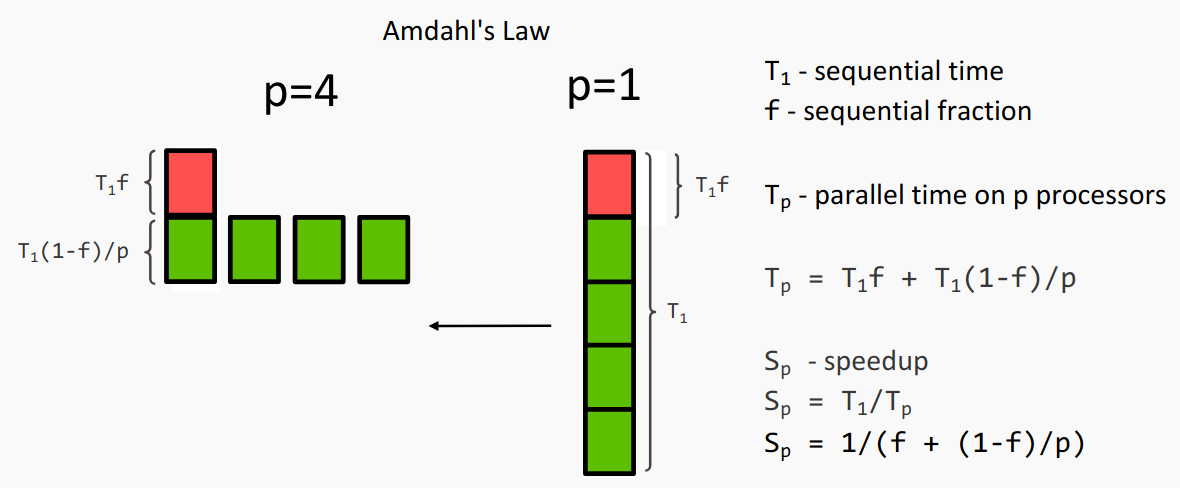

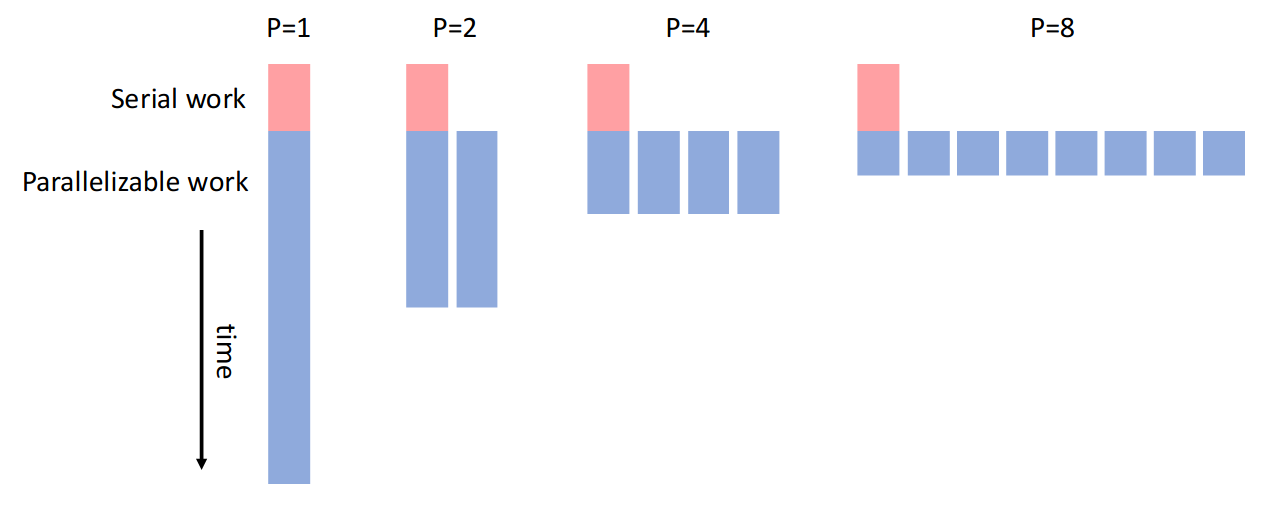

Amdahl’s Law

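The execution time $T_1$ of a sequential program splits into two kinds of work:

- parallelisable work: $W_{par}$

- non-parallelisable serial work: $W_{ser}$

Given $p$ workers available, the times for sequential and parallel execution are related as follows: the sequential time is

$$T_1 = W_{ser} + W_{par}$$

and this bounds the parallel execution time to

$$T_p \ge W_{ser} + \frac{W_{par}}{p}$$

Amdahl's Law

$$S_p = \frac{T_1}{T_p} \le \frac{W_{ser} + W_{par}}{W_{ser} + W_{par} / p}$$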

Parallelisable fraction

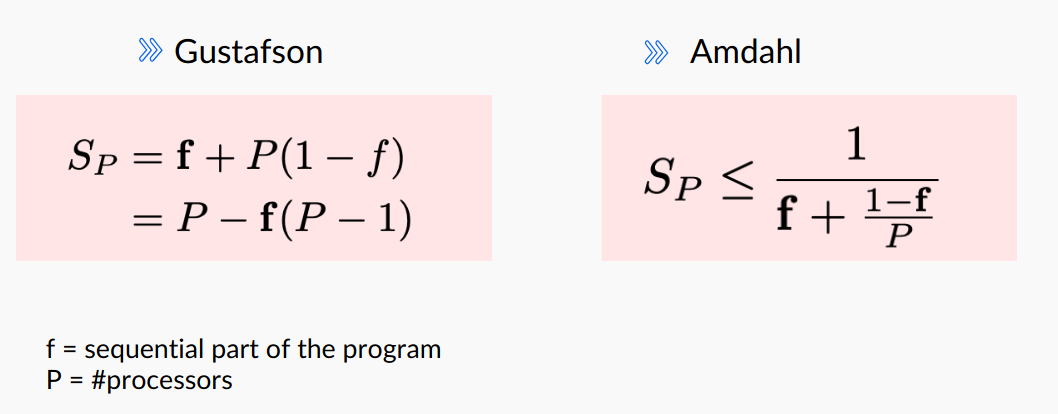

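If $f$ is the fraction of non-parallelisable work, then $W_{ser} = f \, T_1$ and $W_{par} = (1 - f) \, T_1$, which gives

$$S_p \le \frac{1}{f + \frac{1 - f}{p}}$$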

Proof:

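- The speedup is $S_p = T_1 / T_p$.

- $T_1 = f \, T_1 + (1 - f) \, T_1$ is simply the time it takes to do the serial fraction $f$, then the parallel one

- $T_p \ge f \, T_1 + \frac{(1 - f) \, T_1}{p}$ has the same amount of time for the serial fraction, but we divide the parallel fraction by $p$

- We can then cancel the $T_1$ and get the speedup bound $S_p \le \frac{1}{f + (1 - f)/p}$.

Note, if we have infinite workers, $S_\infty \le 1 / f$.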
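The bound can be evaluated numerically to see how quickly it saturates. This is a minimal sketch; the function name `amdahl_speedup` and the serial fraction of 0.1 are assumptions chosen for illustration.

```python
def amdahl_speedup(f, p):
    """Upper bound on speedup for serial fraction f on p workers (Amdahl's Law)."""
    return 1.0 / (f + (1.0 - f) / p)

f = 0.1  # hypothetical: 10% of the work is non-parallelisable
for p in (1, 2, 4, 8, 16, 1024):
    s = amdahl_speedup(f, p)
    # Efficiency bound is the speedup bound divided by the number of workers.
    print(f"p={p:5d}  speedup <= {s:6.2f}  efficiency <= {s / p:5.2f}")

# With unlimited workers the bound approaches 1 / f.
print(f"limit for p -> infinity: {1.0 / f:.1f}")
```

For this serial fraction the bound never exceeds 10, no matter how many workers are added, while the efficiency per worker keeps falling.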

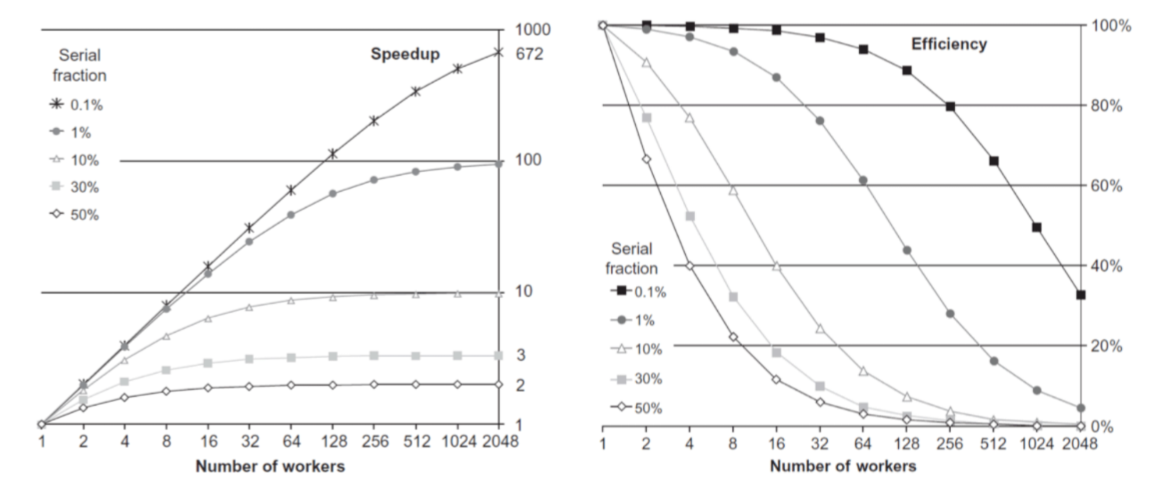

If we compare the speedup and efficiency of a workload for different serial fractions, we can see that the gains flatten off quickly.

Amdahl’s Law is a pessimistic view: it puts a hard limit on scalability. Every non-parallel part of a program eats into the achievable speedup.

The effort to reduce the fraction of non-parallel code pays off in large performance gains!

One has to weigh the cost of adding resources against the actual performance gain.

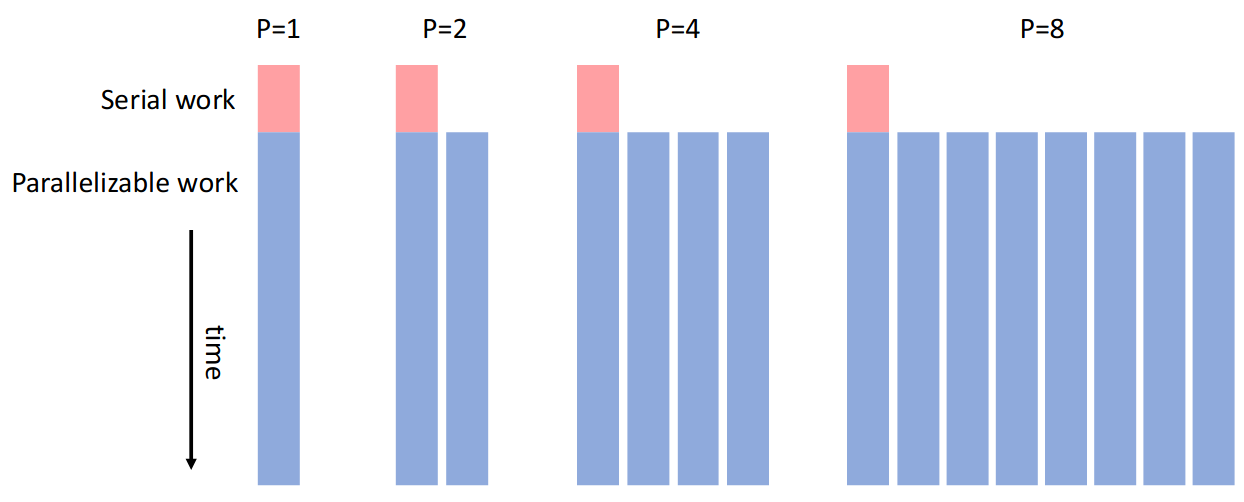

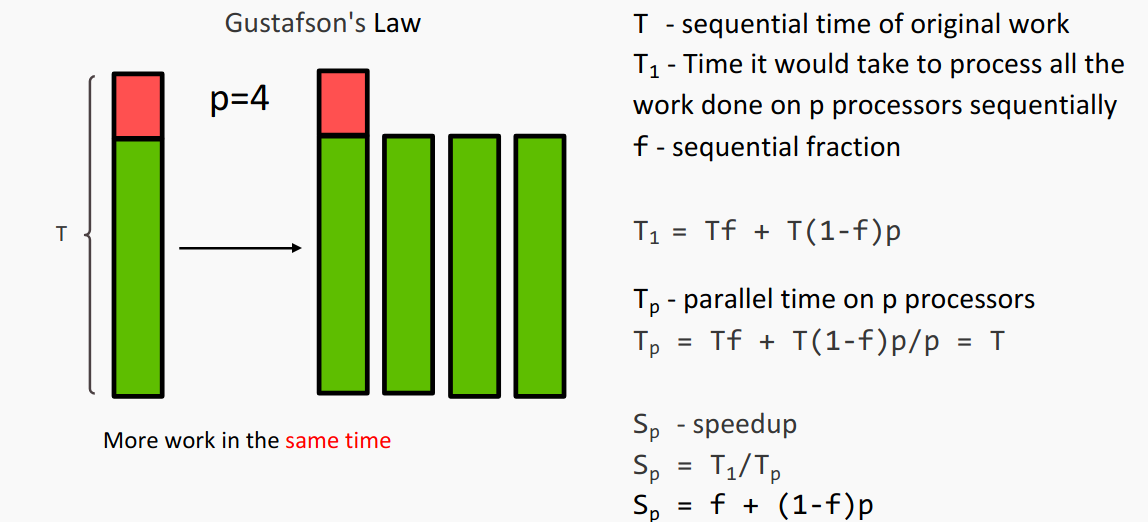

Gustafson’s Law

Gustafson’s Law is the optimistic version. It keeps the run-time constant and lets the problem size grow: more processors allow larger problems to be solved in the same time.

Gustafson's Law

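With serial fraction $f$ of a fixed wall-clock time $T_{wall}$ on $p$ processors, the work done is

$$W = p \, (1 - f) \, T_{wall} + f \, T_{wall}$$

and

$$S_p = f + p \, (1 - f) = p - f \, (p - 1)$$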

Proof:

- For Gustafson’s Law we take the sequential time to be all the work of the parallel run, just done sequentially

- $T_1 = f \, T_{wall} + p \, (1 - f) \, T_{wall}$: we basically stack the amount we could have done in parallel

- Then the parallel time is just $T_p = T_{wall}$

- In the speedup $S_p = T_1 / T_p$ the $T_{wall}$ cancels and just gives us $f + p \, (1 - f)$, as we have to do the sequential fraction $f$ once, then we do the parallel fraction $(1 - f)$ on as many processors as we want.
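The steps of the proof can be followed numerically. This is a sketch under stated assumptions: the function names, the serial fraction of 0.1 and the wall-clock time of 1.0 are hypothetical values chosen for illustration.

```python
def gustafson_speedup(f, p):
    """Scaled speedup for serial fraction f of the parallel run on p processors."""
    return f + p * (1.0 - f)

def gustafson_from_times(f, p, t_wall=1.0):
    """Same result, derived from the two execution times as in the proof."""
    t_parallel = t_wall                                   # fixed wall-clock time
    t_sequential = f * t_wall + p * (1.0 - f) * t_wall    # parallel work stacked up
    return t_sequential / t_parallel

f = 0.1  # hypothetical: 10% of the wall-clock time is serial
for p in (1, 4, 16, 64):
    print(f"p={p:3d}  scaled speedup = {gustafson_speedup(f, p):6.2f}")
```

Unlike the fixed-work bound, this scaled speedup keeps growing with the number of processors, because the parallel part of the problem grows with them.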

Summary

Amdahl’s and Gustafson’s Laws aren’t different views on the same problem. They make different assumptions: Amdahl’s Law fixes the amount of work, Gustafson’s Law fixes the run-time.