6.1 Reductions and Maps

Reductions and maps are the standard patterns of fork-join parallelism.

Reduction always achieves $O(\log n)$ parallel time (span).
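As a minimal sketch of such a reduction, the following sums an array with Java's fork-join framework. The class name `SumTask`, the helper `sum`, and the value of `CUTOFF` are assumptions made for illustration, not part of the notes.

```java
import java.util.concurrent.ForkJoinPool;
import java.util.concurrent.RecursiveTask;

// Sketch of a divide-and-conquer reduction (here: sum) over the range [lo, hi).
class SumTask extends RecursiveTask<Long> {
    static final int CUTOFF = 1_000; // assumed sequential threshold, tune per machine
    final int[] input;
    final int lo, hi;

    SumTask(int[] input, int lo, int hi) {
        this.input = input;
        this.lo = lo;
        this.hi = hi;
    }

    @Override
    protected Long compute() {
        if (hi - lo <= CUTOFF) {
            // Base case: small ranges are summed sequentially.
            long sum = 0;
            for (int i = lo; i < hi; i++) sum += input[i];
            return sum;
        }
        int mid = lo + (hi - lo) / 2;
        SumTask left = new SumTask(input, lo, mid);
        SumTask right = new SumTask(input, mid, hi);
        left.fork();                      // run the left half asynchronously
        long rightSum = right.compute();  // reuse the current thread for the right half
        long leftSum = left.join();       // wait for the left half
        return leftSum + rightSum;        // combine: this is what makes it a reduction
    }

    // Hypothetical convenience wrapper.
    static long sum(int[] input) {
        return ForkJoinPool.commonPool().invoke(new SumTask(input, 0, input.length));
    }
}
```

The recursion depth is logarithmic in the input size, which is where the $O(\log n)$ span comes from. Any other associative operation (max, count, and so on) can replace the addition.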

Map achieves $O(\log n)$ parallel time (using divide and conquer).
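A divide-and-conquer map can be sketched in the same way. Here each task writes into its own slice of the output array and returns nothing. `MapTask`, `map`, and `CUTOFF` are illustrative names and values, not taken from the notes.

```java
import java.util.concurrent.ForkJoinPool;
import java.util.concurrent.RecursiveAction;
import java.util.function.IntUnaryOperator;

// Sketch of a divide-and-conquer map: output[i] = f(input[i]) for i in [lo, hi).
class MapTask extends RecursiveAction {
    static final int CUTOFF = 1_000; // assumed sequential threshold
    final int[] input, output;
    final int lo, hi;
    final IntUnaryOperator f;

    MapTask(int[] input, int[] output, int lo, int hi, IntUnaryOperator f) {
        this.input = input;
        this.output = output;
        this.lo = lo;
        this.hi = hi;
        this.f = f;
    }

    @Override
    protected void compute() {
        if (hi - lo <= CUTOFF) {
            // Base case: apply f sequentially to a small range.
            for (int i = lo; i < hi; i++) output[i] = f.applyAsInt(input[i]);
            return;
        }
        int mid = lo + (hi - lo) / 2;
        MapTask left = new MapTask(input, output, lo, mid, f);
        MapTask right = new MapTask(input, output, mid, hi, f);
        left.fork();
        right.compute();
        left.join(); // only synchronization, there is no result to combine
    }

    // Hypothetical convenience wrapper.
    static int[] map(int[] input, IntUnaryOperator f) {
        int[] output = new int[input.length];
        ForkJoinPool.commonPool().invoke(new MapTask(input, output, 0, input.length, f));
        return output;
    }
}
```

Because the tasks write to disjoint index ranges, no locking is needed.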

Here, data structures matter. Implementing a map or a reduction on a LinkedList incurs a lot of overhead, since reaching an element requires iterating over all elements in the list before it, so even splitting the input in half costs $O(n)$.

Using a balanced tree is beneficial (trees are as flexible as lists if we don’t know the size in advance). This lets us divide the work by descending into the subtrees in parallel instead of iterating through a list.

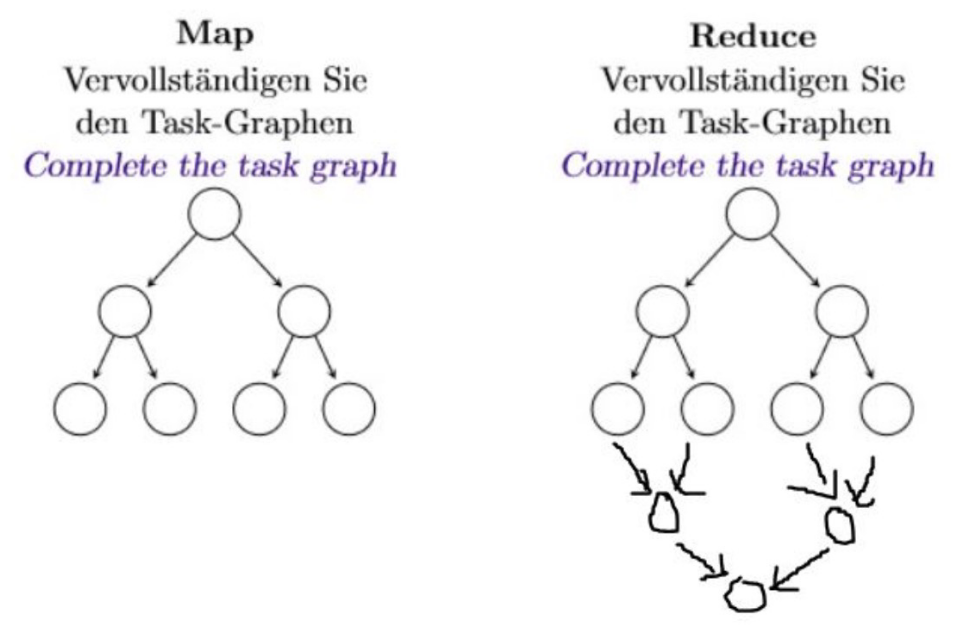

Task graphs:

With map, we don’t combine the results after the leaves of the task graph, since each task writes its own part of the output. With reduce, we have to combine them, as we produce a single output value.

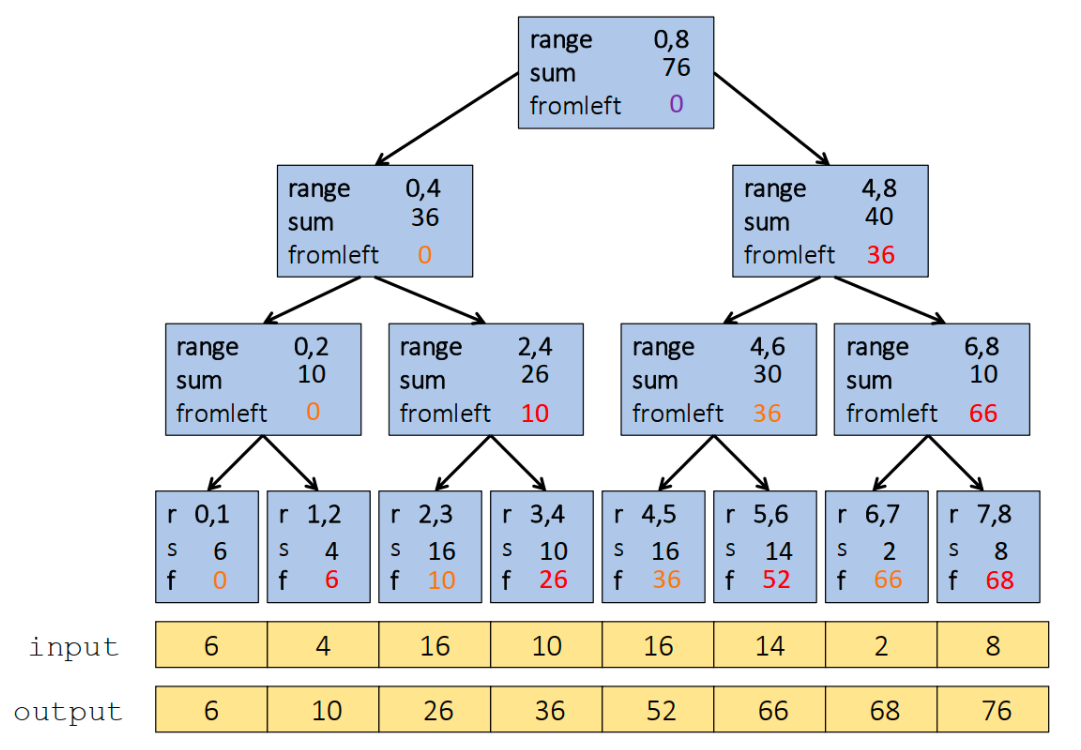

6.2 Prefix Sum

- Up: Build a binary tree where

  - Root has sum of the range $[0, n)$

  - If a node has sum of $[lo, hi)$ and $hi > lo + 1$,

    - Left child has sum of $[lo, mid)$

    - Right child has sum of $[mid, hi)$

  - A leaf has sum of $[i, i+1)$, i.e., `input[i]`

This is an easy fork-join computation: combine results by actually building a binary tree with all the range-sums. The tree is built bottom-up in parallel.

Analysis: $O(n)$ work, $O(\log n)$ span

- Down: Pass down a value `fromLeft`

  - Root given a `fromLeft` of 0

  - Node takes its `fromLeft` value and

    - Passes its left child the same `fromLeft`

    - Passes its right child its `fromLeft` plus its left child’s `sum` (as stored in the up pass)

  - At the leaf for array position $i$: `output[i] = fromLeft + input[i]`

This is an easy fork-join computation: traverse the tree built in the up pass and produce no result.

- Leaves assign to `output`

- Invariant: `fromLeft` is the sum of the elements left of the node’s range

Analysis: $O(n)$ work, $O(\log n)$ span
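The two passes can be sketched with Java's fork-join framework as follows. This is a sketch under simplifying assumptions: the names `PrefixSum`, `Node`, `Up`, and `Down` are made up for illustration, and leaves cover exactly one element, whereas a practical version would likely use a sequential cutoff.

```java
import java.util.concurrent.ForkJoinPool;
import java.util.concurrent.RecursiveAction;
import java.util.concurrent.RecursiveTask;

class PrefixSum {
    // Tree node holding the sum of input[lo, hi); left/right are null for leaves.
    static final class Node {
        final int lo, hi;
        final long sum;
        final Node left, right;

        Node(int lo, int hi, long sum, Node left, Node right) {
            this.lo = lo; this.hi = hi; this.sum = sum;
            this.left = left; this.right = right;
        }
    }

    // Up pass: build the tree of range sums bottom-up.
    static final class Up extends RecursiveTask<Node> {
        final int[] input;
        final int lo, hi;

        Up(int[] input, int lo, int hi) {
            this.input = input; this.lo = lo; this.hi = hi;
        }

        @Override
        protected Node compute() {
            if (hi - lo == 1) return new Node(lo, hi, input[lo], null, null); // leaf
            int mid = lo + (hi - lo) / 2;
            Up l = new Up(input, lo, mid);
            Up r = new Up(input, mid, hi);
            l.fork();
            Node rightNode = r.compute();
            Node leftNode = l.join();
            return new Node(lo, hi, leftNode.sum + rightNode.sum, leftNode, rightNode);
        }
    }

    // Down pass: push fromLeft down the tree; leaves write the output.
    static final class Down extends RecursiveAction {
        final Node node;
        final long fromLeft; // invariant: sum of all elements left of node's range
        final int[] input;
        final long[] output;

        Down(Node node, long fromLeft, int[] input, long[] output) {
            this.node = node; this.fromLeft = fromLeft;
            this.input = input; this.output = output;
        }

        @Override
        protected void compute() {
            if (node.left == null) { // leaf for position node.lo
                output[node.lo] = fromLeft + input[node.lo];
                return;
            }
            Down l = new Down(node.left, fromLeft, input, output);
            Down r = new Down(node.right, fromLeft + node.left.sum, input, output);
            l.fork();
            r.compute();
            l.join();
        }
    }

    // Hypothetical entry point: inclusive prefix sum of input.
    static long[] prefixSum(int[] input) {
        long[] output = new long[input.length];
        if (input.length == 0) return output;
        ForkJoinPool pool = ForkJoinPool.commonPool();
        Node root = pool.invoke(new Up(input, 0, input.length));
        pool.invoke(new Down(root, 0, input, output));
        return output;
    }
}
```

For example, `PrefixSum.prefixSum(new int[] {6, 4, 16, 10, 16, 14, 2, 8})` yields `{6, 10, 26, 36, 52, 66, 68, 76}`.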

6.3 Pack

Pack is built on top of prefix sum.

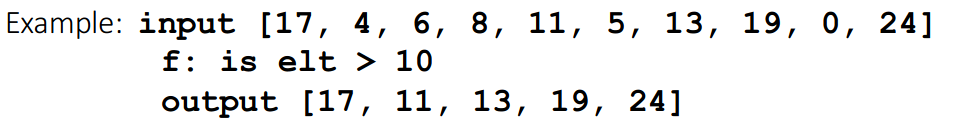

Example: Given an array input, produce an array output containing only the elements for which f(element) is true, in their original order (i.e., a filter).

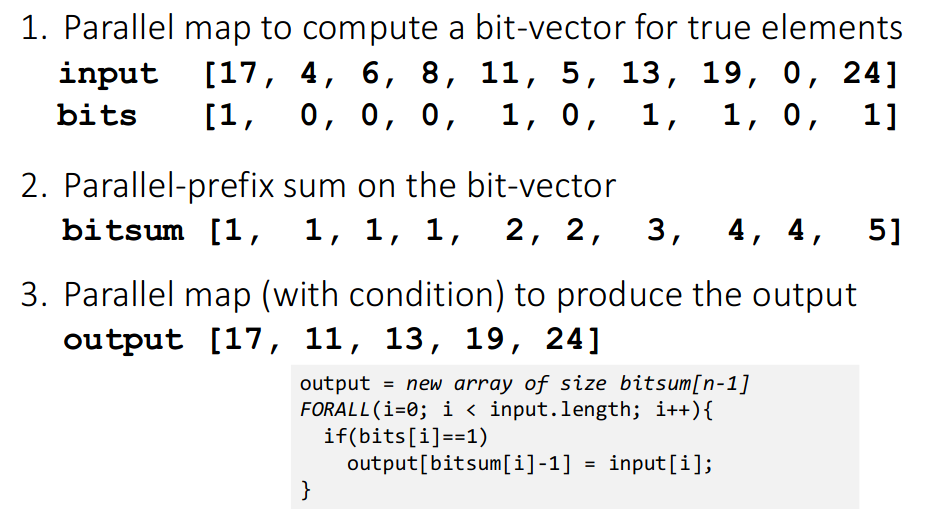

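We execute the following steps to get our result:

1. Parallel map to compute a bit vector marking the elements for which f(element) is true

2. Parallel prefix sum on the bit vector

3. Parallel map to produce the output: each marked element is written to the position given by its prefix sum minus one

Note that:

- the first two steps can be combined into one pass

  - just a different base case for the prefix sum (if f(element) is true, increase by one)

  - has no effect on asymptotic complexity though

- the third step can also be combined into the down pass of the prefix sum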

Analysis: $O(n)$ work, $O(\log n)$ span.
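A compact sketch of pack as three separate passes: a map to a bit vector, a prefix sum over the bits, and a map that writes each kept element to its output position. To stay short, it uses parallel streams for the maps and the standard-library `Arrays.parallelPrefix` in place of a hand-written prefix-sum tree. The class and method names `Pack` and `pack` are assumptions for illustration.

```java
import java.util.Arrays;
import java.util.function.IntPredicate;
import java.util.stream.IntStream;

class Pack {
    // Returns the elements of input for which f holds, in their original order.
    static int[] pack(int[] input, IntPredicate f) {
        int n = input.length;
        if (n == 0) return new int[0];

        // Step 1: parallel map to a bit vector (1 = keep, 0 = drop).
        int[] bits = new int[n];
        IntStream.range(0, n).parallel()
                 .forEach(i -> bits[i] = f.test(input[i]) ? 1 : 0);

        // Step 2: inclusive parallel prefix sum over the bit vector.
        int[] bitsum = bits.clone();
        Arrays.parallelPrefix(bitsum, Integer::sum);

        // Step 3: parallel map; a kept element goes to index bitsum[i] - 1.
        // The last prefix sum is the number of kept elements.
        int[] output = new int[bitsum[n - 1]];
        IntStream.range(0, n).parallel().forEach(i -> {
            if (bits[i] == 1) output[bitsum[i] - 1] = input[i];
        });
        return output;
    }
}
```

For example, `Pack.pack(new int[] {17, 4, 6, 8, 11, 5, 13, 19, 0, 24}, x -> x > 10)` yields `{17, 11, 13, 19, 24}`. Each kept element has a distinct prefix-sum value, so the writes in the last step never collide.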

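Parallel pack is the basis for parallel quicksort. The partition step consists of two packs: one for the values smaller than the pivot and one for the values bigger than the pivot. The results are then placed to the left and right of the pivot.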